How do you measure user experience quality?

This week I attended a small workshop led by Warwick Bracken about managing user experience, drawing on his role at a large consulting firm in Melbourne.

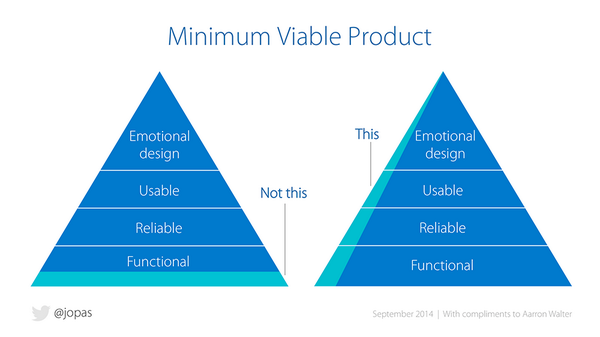

One of the key concepts Warwick raised was that any product (or feature) can be placed on an ascending scale of "quality". The minimum is a functional product, and progressively "higher-quality" versions become reliable, then usable, then pleasurable, in that order. He used this scale to frame how much UX attention and resourcing to allocate when building a product. For example, an extra 2 weeks might often lift a lower-quality "bronze" product to "silver", and hopefully to "gold" standard.

It was similar to this:

(credit: image from Jussi Pasanen's tweet)

However, one HUGE question kept bugging me: how do you measure the quality of a user experience?

Warwick proposed a task-oriented measure of quality, something like:

- a "bronze" product may take 2 weeks and include tasks such as wireframes and design mockups

- a "silver" product may take 3 weeks and include wireframes, design mockups, and in-person user testing

- a "gold" product may take 6 weeks and include all of the above, plus another round with a high-fidelity prototype, focus groups, and so on.

The specific tasks listed here matter less than the underlying assumption: more time = more "tasks" completed = better quality. Does this assumption hold true? In theory I would like to think so, but in practice I suspect it does not (or at least not always).

Another participant proposed a customer-oriented measure of quality, namely using Net Promoter Score (NPS) as a proxy. My gut says this is a "truer" measure of user experience quality, although it may take too long to collect to be of much use in everyday operational practice.
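For context, NPS is usually calculated from a single survey question asking how likely a user is to recommend the product, on a scale of 0 to 10. Respondents scoring 9 or 10 count as promoters, 0 to 6 as detractors, and 7 or 8 as passives; the score is the percentage of promoters minus the percentage of detractors, giving a range of -100 to 100. The Python below is a minimal sketch assuming that standard definition; the function name and the survey responses are hypothetical, for illustration only.

```python
def net_promoter_score(ratings: list[int]) -> float:
    """Return NPS (-100 to 100) for a list of 0-10 'likely to recommend' ratings.

    Hypothetical helper assuming the standard NPS bands:
    promoters score 9-10, passives 7-8, detractors 0-6.
    """
    if not ratings:
        raise ValueError("need at least one rating")
    for r in ratings:
        if not 0 <= r <= 10:
            raise ValueError(f"rating out of range: {r}")

    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    # Passives (7-8) count towards the total but not the numerator.
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical survey responses: 5 promoters, 2 passives, 3 detractors.
responses = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(net_promoter_score(responses))  # 20.0
```

The arithmetic itself is trivial; the slow part is gathering enough responses from real users for the score to be meaningful, which is where the long timeframe comes in.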

So... the question of how to measure user experience quality still bothers me. I suspect it isn't possible to have a completely objective metric that applies in all scenarios; there is too much "art" in crafting a user experience to distil it purely into a set of numbers.

However, I think some indicators of quality could be assessed objectively within a framework of some sort. I don't have the answer yet, but I will look into it further. Perhaps somebody has already proposed a way of measuring similarly subjective fields; for instance, how do you measure the quality of art? Hmmm.